Artificial Intelligence (AI) is a term that appears more and more often in the news. I think it is an area of technology that would have fascinated my parents and, although they are no longer here to enjoy it, I keep trying to figure out how I would have explained it to them.

Computing

Actually, the term AI is used for several things, and there are almost religious debates about what it is and what it is not. That is not what this piece is about; my goal is simply to convey its basic concept and its potential in plain terms.

AI is a branch of computer technology that seeks to make the most of the power of computers, not only to store and analyze huge amounts of information, but also to explore and decide on the best strategy to solve a problem, since a computer works dispassionately, without preconceived ideas, without intuition, and without getting bored or tired.

Better than humans

I just mentioned five aspects in which the computer helps us process information better than humans would:

- I said dispassionately because the computer has no preference about the type of data it is processing; it can analyze photos of cute kittens and photos of horrible murders without saying “aww!” or “eww!”

- Nor does it have preconceived ideas; it will not try to favor any specific answer, because it is totally oblivious to human expectations.

- Nor does it have intuition that would lead it to wrongly favor one theory or dismiss another; instead, it examines every piece of information comprehensively before making its decision.

- The fourth attribute is that it never gets bored. We humans tend to start a task with much excitement, paying close attention to detail, but sooner or later the novelty wears off and we begin to skip steps that seem unnecessary.

- And the last feature is that it never gets tired, so it does not make the common human mistakes that come from fatigue, such as ceasing to pay attention and processing things automatically (ironic, right?), like driving on autopilot.

So, based on this knowledge, we know that a computer can perform an analysis with much more precision than a human being, but why do we now call it AI?

Well, now that we know how well computers can process information, we will focus on understanding this novel approach to computing.

Simple AI

Let’s start with a seemingly very simple problem. I would like to present a picture to my computer, and let the computer tell me if it is a picture of a cat or a dog. Any two-year-old child could solve that problem in a jiffy, but if we get the computer to solve that simple problem today, tomorrow we can ask much more complicated questions.

The traditional system

How was the problem solved before the era of AI? Well, a human being had to identify some known features about cats and dogs, such as the shape of the ears, the shape of the nose, the shape of the face, etc., and then write a program to analyze the image, look for those traits and decide which animal they correspond to.

In other words, in the traditional method, humans decide what logic to use to differentiate the animals and instruct the computer on what steps to follow to achieve the identification. So, even though we had very powerful computers, the traditional system still depended on human beings making all the decisions and using the computers only to speed up the process.
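To give a feel for what that hand-written logic looks like, here is a minimal sketch in Python. It assumes some earlier image-analysis step has already measured a few traits; the trait names, the 0-to-1 scales, and every threshold are invented for illustration and are not the original program.

```python
from dataclasses import dataclass


@dataclass
class Traits:
    """Hypothetical measurements extracted from a picture (all on a 0.0-1.0 scale)."""
    ear_pointiness: float   # 0.0 = floppy, 1.0 = sharply pointed
    snout_length: float     # 0.0 = flat face, 1.0 = long snout
    face_roundness: float   # 0.0 = elongated, 1.0 = round


def classify_by_rules(t: Traits) -> str:
    """Each rule and threshold below was chosen by a human, not learned from data."""
    cat_votes = 0
    if t.ear_pointiness > 0.6:
        cat_votes += 1
    if t.snout_length < 0.4:
        cat_votes += 1
    if t.face_roundness > 0.5:
        cat_votes += 1
    return "cat" if cat_votes >= 2 else "dog"


print(classify_by_rules(Traits(0.9, 0.2, 0.8)))  # pointy ears, short snout, round face
print(classify_by_rules(Traits(0.2, 0.8, 0.3)))  # floppy ears, long snout, long face
# A flat-faced, round-headed dog collects two "cat" votes and is misclassified:
print(classify_by_rules(Traits(0.2, 0.1, 0.9)))
```

The last call shows the weakness of the approach: every exception needs yet another hand-written condition.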

When I wrote my first program to recognize images, I used some graphics libraries, and it took me a couple of weeks to complete the first version.

It was a lot harder than I had originally thought, but the worst part was finding out that the program failed as many times as it succeeded; in other words, its accuracy was about 50%. During the following weeks I tried to figure out why the program was failing to recognize the images, and I kept improving its logic and adding more and more conditions, to the point where the accuracy improved to slightly better than 70%. Even then, because of the great variability among the different types of cats and dogs and in the quality of the pictures, the program became very complex and, despite that, it was still wrong about three times out of ten.

Artificial Intelligence and Machine Learning

The current version of AI relies on a method called Machine Learning (ML). Strictly speaking the two terms are different, since ML is one branch of AI, but for the most part they can be used interchangeably, and we will do so in this article.

AI uses a much older but effective method; to some extent one could say that it is the same method that humans use to learn. How does a baby learn the difference between a dog and a cat? Normally one of the parents or siblings points to an animal, live or in a book, and says “ca-a-t”, “ca-a-t” or “do-o-o-g”, “do-o-o-g”, and sooner or later, after some mistakes and adequate reinforcement, that boy or girl will not get it wrong again.

Training

Although a bit more complex, that’s practically what AI does: the computer is shown a picture and told “cat”, then another picture and again told “cat”, and so on, hundreds of times. Then the same is done with pictures of dogs. During that period, which in AI is called training, no other instruction is given to the computer, except to view the pictures and to receive the correct answer. The computer, meanwhile, analyzes all the variations of cats and tries to find the similarities that capture the “cat” concept, developing diverse and competing theories. For example, it may have initially concluded that all cats are brown, until it was shown a picture of a white cat. Babies do a very similar analysis, though we probably don’t think of it that way.
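As a rough illustration of training, the sketch below learns from nothing but labeled examples. It is a deliberately tiny stand-in: it assumes each picture has already been reduced to two made-up numbers (ear pointiness and snout length), and it uses a simple "average of each group" learner rather than the neural networks that real image systems typically use.

```python
def train(examples):
    """Average the feature vectors seen for each label (a nearest-centroid learner)."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Answer with the label whose learned average is closest to the new picture."""
    def squared_distance(center):
        return sum((a - b) ** 2 for a, b in zip(features, center))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical training data: ([ear_pointiness, snout_length], correct answer)
labeled_pictures = [
    ([0.9, 0.2], "cat"), ([0.8, 0.3], "cat"), ([0.7, 0.1], "cat"),
    ([0.2, 0.8], "dog"), ([0.3, 0.9], "dog"), ([0.1, 0.7], "dog"),
]

centroids = train(labeled_pictures)
print(predict(centroids, [0.85, 0.25]))  # a picture the computer has never seen
```

Notice that nobody wrote a rule such as "pointy ears mean cat"; the only inputs are the examples and their correct answers.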

Validation

Then we enter the validation period, perhaps comparable to the terrible twos, when the child begins to ask whether there are white cats, or why cats’ eyes have vertical pupils, and so on. In AI, this period consists of another series of photos of cats and dogs, labeled accordingly; now, however, the computer no longer tries to learn anything new from the photos. Instead, it tests the different theories it developed during training and sees which one yields the best results.
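Validation can be pictured as a small contest among those theories. In the sketch below, the three candidate theories, the two features (ear pointiness and snout length), and the validation pictures are all hypothetical; the point is only that each theory is scored on labeled pictures it did not learn from, and the best scorer wins.

```python
def accuracy(theory, labeled_examples):
    """Fraction of examples for which the theory gives the correct label."""
    correct = sum(1 for features, label in labeled_examples if theory(features) == label)
    return correct / len(labeled_examples)


# Hypothetical theories; features are (ear_pointiness, snout_length)
candidates = {
    "pointy ears mean cat": lambda f: "cat" if f[0] > 0.5 else "dog",
    "short snout means cat": lambda f: "cat" if f[1] < 0.5 else "dog",
    "ear score above snout score means cat": lambda f: "cat" if f[0] > f[1] else "dog",
}

# Labeled pictures held back from training
validation_set = [
    ((0.9, 0.2), "cat"),
    ((0.6, 0.3), "cat"),
    ((0.4, 0.2), "cat"),  # a cat with folded ears
    ((0.8, 0.9), "dog"),  # a dog with pointy ears
    ((0.2, 0.8), "dog"),
    ((0.3, 0.4), "dog"),  # a short-snouted dog
]

scores = {name: accuracy(theory, validation_set) for name, theory in candidates.items()}
best = max(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: {score:.0%}")
print("Selected theory:", best)
```

No theory is modified during this step; the validation pictures are used only to compare them.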

Model

Once the computer identifies the best solution, it creates a model, which can be described as the set of rules that can be applied to a problem to determine whether it matches a certain pattern. Once the computer has found the best model for cats, it is ready to identify cats in any picture. However, the computer will never tell you flatly that something is a cat; instead, it will say something like: the picture, or object, matches a cat at 99.232% and a dog at 6.032%.
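The idea that a model answers with match percentages rather than a flat yes or no can be sketched as follows. Everything here is an assumption made for illustration: the "model" is just one reference point per animal, and each score is computed independently from how close the picture is to that point, so the scores need not add up to 100%. Many real classifiers normalize their scores so that they do.

```python
import math

# Hypothetical model: one learned reference point per animal,
# expressed as (ear_pointiness, snout_length).
MODEL = {"cat": (0.8, 0.2), "dog": (0.2, 0.8)}


def match_scores(features, model=MODEL, sharpness=4.0):
    """Return an independent 0-100 match score for every label in the model."""
    scores = {}
    for label, center in model.items():
        squared_distance = sum((a - b) ** 2 for a, b in zip(features, center))
        # The score is 100 for a perfect match and decays as the distance grows.
        scores[label] = 100.0 * math.exp(-sharpness * squared_distance)
    return scores


for label, score in match_scores((0.78, 0.25)).items():
    print(f"matches a {label} at {score:.3f}%")
```

It is then up to the person or program using the model to decide how high a score must be before acting on it.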

Methodology differences

As you can see, both traditional computerized solutions and AI use computers to look for solutions. The biggest difference between the two methodologies is that in the first, the computer is fed the problems and the logic to solve them, whereas in the AI method the computer is fed enough problems and correct answers until it finds the best way to solve them on its own.

Accuracy differences

The other big difference, and the one that hit me personally, is that with the traditional methodology I was able to achieve a correct result between 50% and 80% of the time, but once I switched my program to use AI, the accuracy immediately jumped to over 99%.

Future

Simple, right?

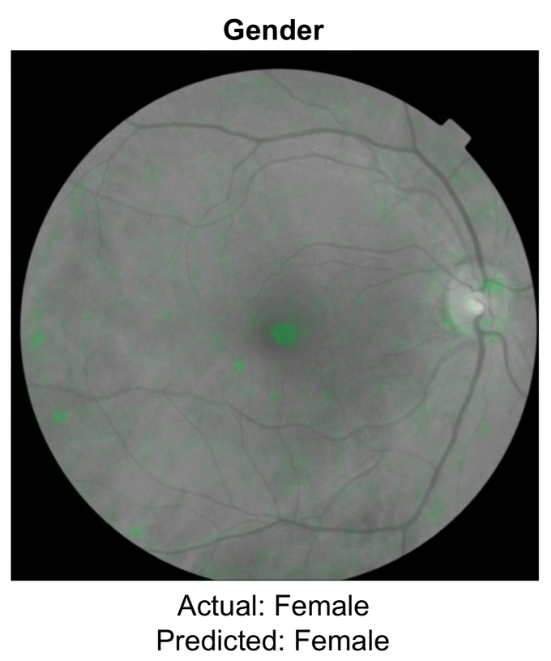

Well, not so much. AI is already doing things that are beyond our understanding. For example, after being trained to find tumors in photos of the optic nerve of thousands of patients, the computer did something unexpected: it correctly predicted the gender of the patients. The researchers went back to look at the photos, and so far they have not been able to find out what the computer based that determination on.

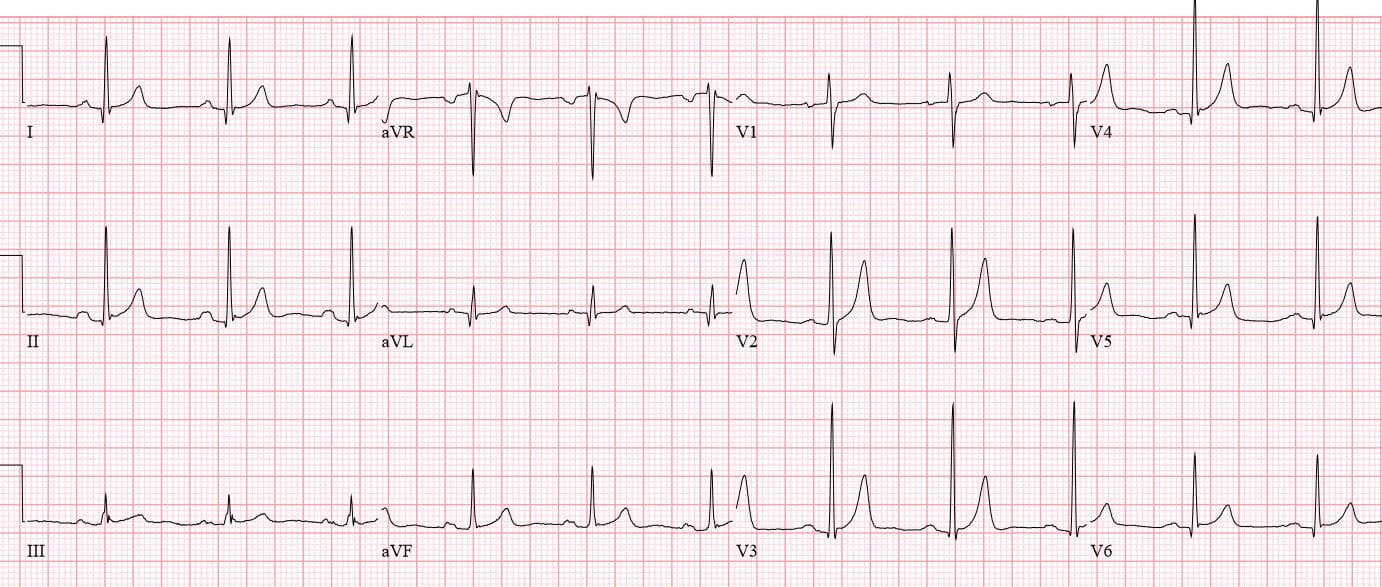

Just today I read about another case, in which researchers fed a computer the electrocardiograms of thousands of patients and the computer correctly predicted who would die within the following year. The researchers are still scratching their heads: they, who have analyzed electrocardiograms for decades, cannot identify what pattern the computer relied on to make its prediction, and to make it correctly.

Anyway, it is an exciting topic. I spend many hours using AI, learning new things about it, and observing its fascinating predictions. I wish my parents could have seen it.